Content #

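Assume a single I/O request has a latency of 10 ms. In theory, the disk can then serve 100 I/O requests per second (1000 ms / 10 ms = 100). This calculation only holds if the 100 requests arrive strictly one after another, so that no request ever has to wait behind a previous one.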

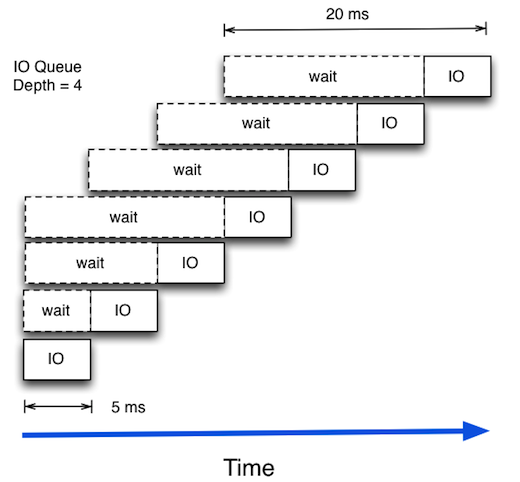
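The 100 requests-per-second figure is simply the reciprocal of the per-request latency. A minimal sketch of that arithmetic, assuming requests are served strictly back to back; the function name `max_serial_iops` is illustrative and does not come from the source:

```python
def max_serial_iops(latency_ms: float) -> float:
    """Upper bound on IOPS when the disk serves one request at a time
    and every request arrives exactly when the previous one finishes."""
    return 1000.0 / latency_ms


print(max_serial_iops(10.0))  # 100.0
```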

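If a new I/O request arrives while the disk is still processing another one, it has to wait, and that waiting time counts toward its latency. In practice, an application's I/O requests arrive at random times. So even if the disk can handle a single I/O request in 10 ms, the latency the application observes for an individual I/O will often exceed 10 ms.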
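The effect of waiting can be illustrated with a small simulation. This is only a sketch under stated assumptions: a single disk that serves requests first-in, first-out with a fixed 10 ms service time, and arrival gaps drawn from an exponential distribution with a 12.5 ms mean. The helper names `fifo_latencies` and `random_arrivals` are hypothetical.

```python
import random


def fifo_latencies(arrivals_ms, service_ms=10.0):
    """Per-request latency (queue wait + service) for one disk serving FIFO."""
    latencies = []
    disk_free_at = 0.0
    for arrival in sorted(arrivals_ms):
        start = max(arrival, disk_free_at)  # wait if the disk is still busy
        finish = start + service_ms
        latencies.append(finish - arrival)
        disk_free_at = finish
    return latencies


def random_arrivals(n, mean_gap_ms, seed=0):
    """Arrival times with exponentially distributed gaps (an assumption)."""
    rng = random.Random(seed)
    now, arrivals = 0.0, []
    for _ in range(n):
        now += rng.expovariate(1.0 / mean_gap_ms)
        arrivals.append(now)
    return arrivals


def mean(values):
    return sum(values) / len(values)


evenly_spaced = [i * 10.0 for i in range(100)]        # one request every 10 ms
bursty = random_arrivals(100, mean_gap_ms=12.5)       # random arrival times

print("evenly spaced:", mean(fifo_latencies(evenly_spaced)), "ms")
print("random       :", mean(fifo_latencies(bursty)), "ms")
```

With evenly spaced arrivals no request ever waits, so the mean latency equals the 10 ms service time. With random arrivals some requests land while the disk is busy, which pushes the mean above 10 ms even though the average arrival rate is lower.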

If the read I/O queue depth is increased, average latency goes up, and so does IOPS.

How is that possible? How can a disk drive suddenly do more random IOPS at the cost of latency? The trick lies in that the storage subsystem can be smart and look at the queue and then order the I/Os in such a way that the actual access pattern to disk will be more serialised. So a disk can serve more IOPS at the cost of an increase in average latency. Depending on the achieved latency and the performance requirements of the application layer, this can be acceptable or not.
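The trade-off can be reproduced with a toy model. The sketch below is an illustration under assumptions that are not from the source: the cost model (2 ms fixed overhead plus up to 16 ms of seek time proportional to head travel), the shortest-seek-first reordering policy, and the function name `simulate` are all hypothetical. The application keeps a fixed number of requests outstanding, and the disk always picks the queued request closest to the current head position.

```python
import random


def simulate(queue_depth, n_requests=2000, seed=0):
    """Closed-loop model: `queue_depth` requests are always outstanding.

    Hypothetical cost model: 2 ms fixed overhead per I/O plus seek time
    proportional to head travel (16 ms for a full stroke).
    Returns (iops, mean_latency_ms).
    """
    rng = random.Random(seed)
    now, head = 0.0, 0.5
    # Each pending request is (platter position in [0, 1), submit time in ms).
    pending = [(rng.random(), 0.0) for _ in range(queue_depth)]
    total_latency = 0.0
    for _ in range(n_requests):
        # Reorder the queue: serve the request nearest to the head first.
        i = min(range(len(pending)), key=lambda k: abs(pending[k][0] - head))
        pos, submitted = pending.pop(i)
        now += 2.0 + 16.0 * abs(pos - head)
        head = pos
        total_latency += now - submitted
        # The application immediately issues a replacement request.
        pending.append((rng.random(), now))
    return n_requests / (now / 1000.0), total_latency / n_requests


for depth in (1, 4, 16, 64):
    iops, latency = simulate(depth)
    print(f"queue depth {depth:2d}: {iops:7.1f} IOPS, mean latency {latency:7.2f} ms")
```

In this model a deeper queue gives the disk more candidates to choose from, so each seek is shorter and throughput rises. At the same time every request shares the disk with more neighbours, so the time from submission to completion grows.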

From #

https://louwrentius.com/understanding-iops-latency-and-storage-performance.html